The Accountability Decision

Agents do not fail loudly. That is precisely the problem.

This is the fourth essay in a four-part series on federated metadata governance. The first argued that source governance is a foundation decision requiring system authority. The second argued that the translation layer between domain representations is engineering work, not a modelling exercise. The third named the ongoing obligations of distributed ownership and showed why they are permanent design costs, not temporary friction. This essay asks what changes when agents become the primary consumers of federated metadata, and what governance architecture actually looks like when designed for that reality rather than extended to cover it.

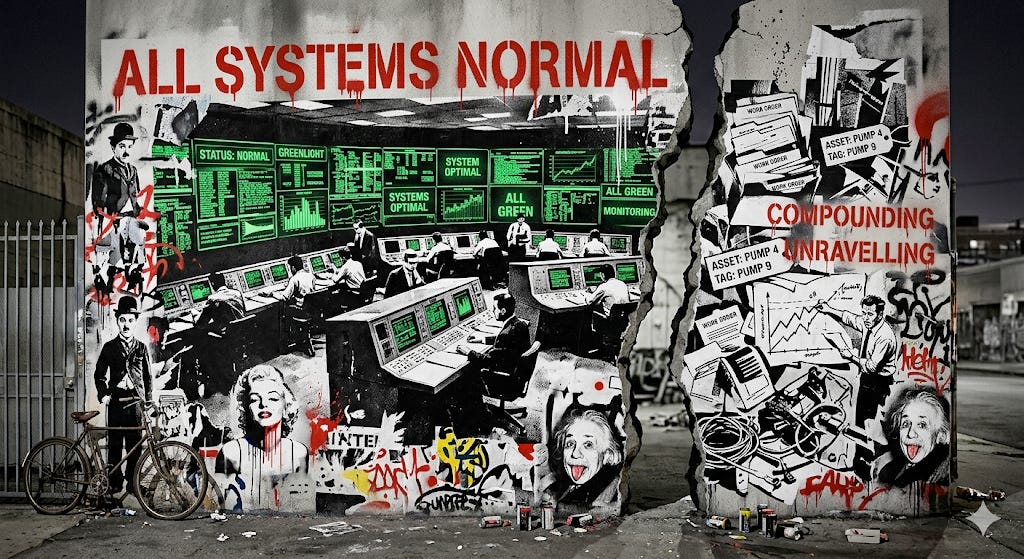

An agent managing maintenance schedules does not produce a report for a human to review. It reads asset status, applies priority logic, and writes updated work orders back into the operational system, continuously, at whatever speed the workflow demands. For several weeks, everything reports green. Work orders are being processed. Queues are clearing. And then field teams begin raising queries: jobs are being assigned to the wrong locations, urgent tasks are being deprioritised, records the agent is writing back are inconsistent with what the source system expected to receive. The investigation traces backwards through weeks of compliant-looking operations. The governance gate passed at every step. The failure was not in the agent. It was in an identifier format that had drifted between two source systems, silently, in a change that passed its own release process without triggering any downstream dependency check.

The specific danger is not that the system failed loudly. It is that it continued to succeed quietly, producing outcomes the business did not recognise as failures until the damage had already compounded. This is silent breakage: governance gates passing, dashboards reporting green, and operational reality quietly diverging from what the business expects.

The previous essay closed with two tests: can you name the person with authority to enforce a shared standard, and can you name the person with authority to change a source system the coordination layer cannot reconcile. Those tests remain necessary. In an agentic environment they are no longer sufficient. Even a named person with genuine authority cannot govern through decision review at machine speed. The accountability model itself must change shape.

Most organisations deploying agentic AI are applying the governance architecture they already have. The intention is sound. The architecture is the wrong shape.

The Feedback Loop Nobody Has Accounted For

Agents cross domain boundaries in sequence, consuming data from each system they pass through and writing outputs back into the same systems that fed their decisions. That traversal pattern is what makes every coordination cost from the previous essays directly operational rather than merely structural.

In a human-centric system, a metadata failure surfaces as an analytical problem: a dashboard shows an unexpected number, an analyst investigates, a ticket is raised. The failure is visible and correctable before it propagates. In an agentic system, the same failure is operationally real and structurally invisible. No alert fires. The governance gate passed. The agent continues acting on the broken state until a human notices that outcomes have stopped making sense.

The deeper problem is what happens in the interval. Agents do not only consume data; they produce it. Every action writes back into the operational systems that feed the next decision. An ungoverned source does not merely produce unreliable inputs. It produces what the first essay called authoritative incoherence, now running not at the level of a dashboard but through every decision the agent makes. And it receives the agent’s unreliable outputs in return, which make the original problem progressively harder to locate inside systems that are all reporting green.

The first feedback loop runs from agent action back into source systems, compounding the original problem invisibly. The second loop, the one most organisations have not built, runs from agent behaviour back into the governance architecture itself: learning from what agents reveal about where the boundaries were wrong, and adjusting them before the next cycle compounds the gap.

Automated monitoring alone cannot resolve a problem that is architecturally invisible by design.

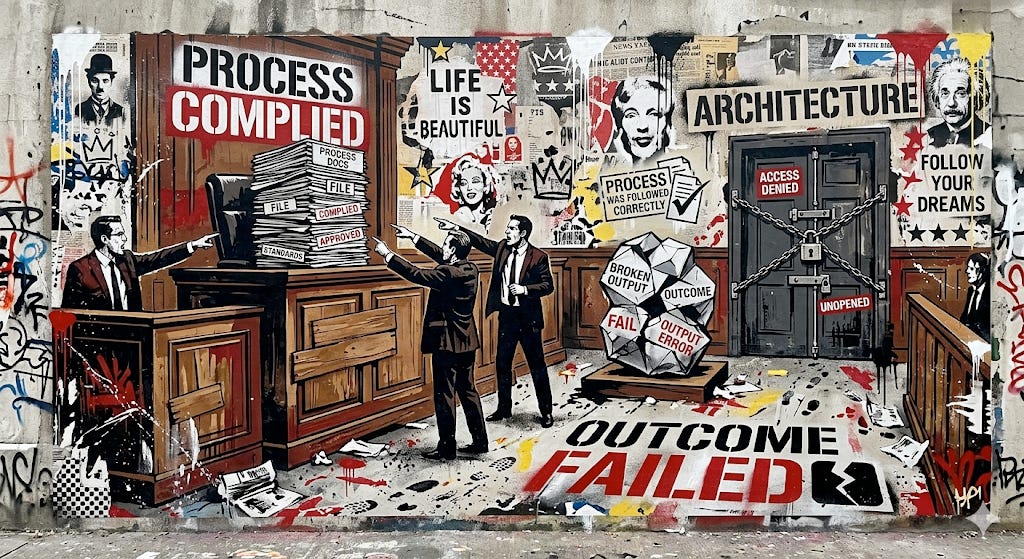

Why Transactional Accountability Is the Wrong Shape

The instinct when deploying agentic systems is to extend existing governance: periodic output reviews, escalation paths, automated monitoring when volume exceeds human capacity. Automated monitoring detects when outputs fall outside expected ranges. It cannot detect the silent breakage pattern, where outputs look correct because no validation rule fired but are operationally wrong because the metadata they were based on changed without notification.

Transactional accountability also builds in an escape route. A reviewer can say the decision looked correct at the time. When something goes wrong, accountability distributes across the chain of approvals until it dissolves. In an agentic system operating at machine speed, the question of who was accountable for which decision becomes unanswerable before the failure has finished compounding.

The governance architecture that works for an agentic environment moves accountability from decisions to architecture. It asks not whether the agent made the right decision but whether the organisation built a system whose boundaries make the right decisions likely and the wrong ones detectable.

In practice this means the person who designed the boundaries of what the agent can and cannot do is accountable for every outcome those boundaries produce, in the same way that an architect is accountable for a building’s structural safety regardless of who laid the individual bricks. Architectural accountability removes the escape route entirely. That is precisely why most organisations are reluctant to adopt it and precisely why agentic environments demand it.

What Governance Architecture Designed for Agents Actually Requires

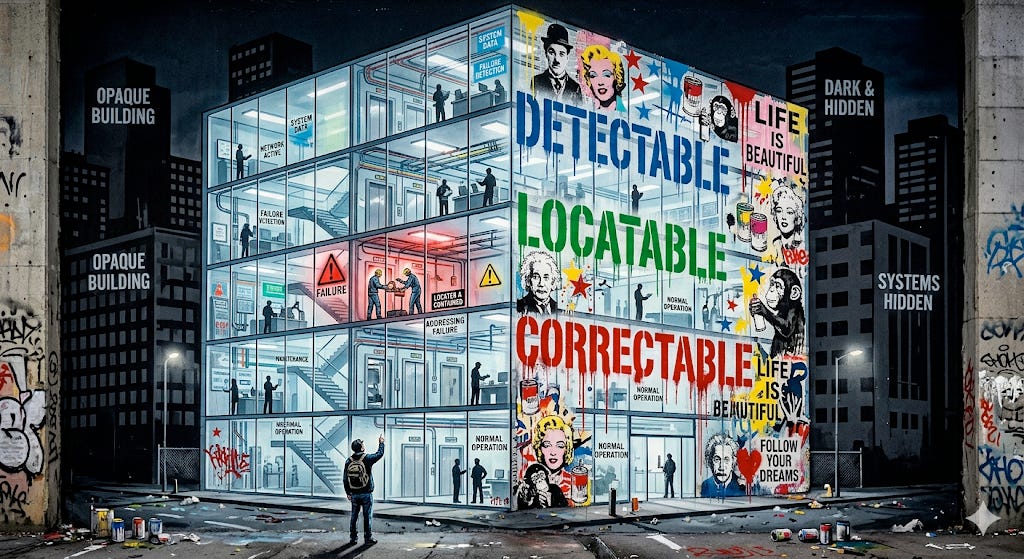

The first requirement is risk-differentiated source governance. The identifiers agents use to traverse systems, the definitions they use to classify and route, the thresholds they use to decide whether to act or escalate: these require source governance applied with the rigour of uptime and security requirements. An agent acting on a drifted identifier does not flag the inconsistency for human review. It acts, at scale, before the inconsistency is visible. The governance investment concentrates where agent action amplifies the consequence.
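One way to picture governing an identifier with that rigour is a contract check that runs against every source system an agent traverses. The sketch below is illustrative only: the `AST-000123` format, the source names, and the function `find_identifier_drift` are assumptions made for the example, not anything prescribed by this series.

```python
import re

# Hypothetical contract: asset identifiers look like "AST-000123".
ASSET_ID_PATTERN = re.compile(r"AST-\d{6}")


def find_identifier_drift(ids_by_source, pattern=ASSET_ID_PATTERN):
    """Return, per source system, the identifiers that break the agreed format."""
    drift = {}
    for source, ids in ids_by_source.items():
        non_conforming = [i for i in ids if not pattern.fullmatch(i)]
        if non_conforming:
            drift[source] = non_conforming
    return drift


# Illustrative sample: one source has quietly changed its identifier format.
sample = {
    "maintenance": ["AST-000123", "AST-000456"],
    "field_ops": ["AST-000123", "ast_456"],
}
drift = find_identifier_drift(sample)
print(drift)
```

The point of the sketch is placement rather than sophistication: a check like this belongs in the release process of each source system, so that a format change fails there instead of surfacing weeks later in agent behaviour.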

The second requirement is translation layer integrity maintained under agentic load. Where the previous essay described the versioning and standards obligations as permanent costs, under agentic load those obligations acquire operational urgency. The translation layer is not maintaining mappings between stable representations. It is continuously negotiating between domains whose meaning is always local and contextual. Agents have no capacity to recognise when that negotiation has broken down. A translation that drifts without notification does not produce a contested report. It produces a silent operational failure that compounds through the feedback loop described above.
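A translation layer can at least refuse to guess. The sketch below, under assumed names throughout, pins each mapping to a domain and schema version and raises when it meets a version or value it has not been told about, rather than passing something plausible through. The domain `maintenance`, the status codes, and `translate_status` are hypothetical.

```python
class UnknownSchemaVersion(Exception):
    """Raised when no mapping is registered for a domain and schema version."""


# Hypothetical mappings: (domain, schema version) -> local code to shared term.
STATUS_MAPPINGS = {
    ("maintenance", "v1"): {"OPEN": "pending", "DONE": "closed"},
    ("maintenance", "v2"): {
        "OPEN": "pending",
        "IN_PROGRESS": "active",
        "DONE": "closed",
    },
}


def translate_status(domain, version, value):
    """Translate a local status code, failing loudly on anything unrecognised."""
    mapping = STATUS_MAPPINGS.get((domain, version))
    if mapping is None:
        raise UnknownSchemaVersion(f"no mapping registered for {domain} {version}")
    if value not in mapping:
        raise ValueError(f"{value!r} is not defined in {domain} {version}")
    return mapping[value]


print(translate_status("maintenance", "v2", "IN_PROGRESS"))
```

This does not solve the harder problem of meaning that shifts while the schema version stays the same. It only converts one class of silent drift into a loud failure that a person can be asked to resolve.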

The third requirement is a learning loop that treats agent behaviour as a governance signal rather than an operational outcome to be managed. Upfront design cannot anticipate every inconsistency agents will surface when traversing real operational systems at real speed. Think of it as a circuit breaker: when agent behaviour signals that the foundation or translation layer has drifted beyond a reliable threshold, the architecture should pause agent write-access while the fault is located and fixed, rather than allow the compounding to continue. The organisations that navigate this well treat those surfaced inconsistencies as information about where the governance architecture needs to mature, feeding back into source governance and translation layer maintenance continuously. Without this loop, the architecture drifts from the operational reality it governs until a failure makes the gap visible.
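The circuit breaker idea can be sketched in a few lines. Everything specific here is an assumption for illustration: the signal (whether each agent write reconciles with what the source system expected), the window size, the threshold, and the class name `WriteCircuitBreaker`. The essay does not specify what the real signal should be.

```python
from collections import deque


class WriteCircuitBreaker:
    """Pause agent writes when too many recent writes fail reconciliation."""

    def __init__(self, window=50, max_mismatch_rate=0.1):
        self.window = window
        self.max_mismatch_rate = max_mismatch_rate
        self._recent = deque(maxlen=window)  # True = write reconciled cleanly
        self.tripped = False

    def record(self, reconciled):
        """Record one write outcome; trip once a full window exceeds the threshold."""
        self._recent.append(bool(reconciled))
        if len(self._recent) == self.window:
            mismatches = self._recent.count(False)
            if mismatches / self.window > self.max_mismatch_rate:
                self.tripped = True

    def allow_write(self):
        return not self.tripped

    def reset(self):
        """Called by a person once the fault has been located and fixed."""
        self._recent.clear()
        self.tripped = False


# Illustrative run: 3 mismatches in a window of 10 exceeds a 20% threshold.
breaker = WriteCircuitBreaker(window=10, max_mismatch_rate=0.2)
for reconciled in [True] * 7 + [False] * 3:
    breaker.record(reconciled)
print(breaker.allow_write())
```

Two design choices carry the argument. The breaker stays open until a person resets it, which keeps a named human in the loop at the architectural level rather than the decision level. And every trip is itself a governance signal: a record of where the boundaries were wrong, to be fed back into source governance and translation layer maintenance.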

What This Gives the Organisation

An organisation that has made the foundation decision, invested in the translation layer as a service, and funded the coordination obligations honestly gains something specific: failures become detectable before they compound, locatable when they occur, and correctable without tracing backwards through months of compliant-looking operations. For those responsible for deploying or governing agentic capability, that is the difference between a programme that builds confidence over time and one that accumulates anxiety.

Most organisations extending existing governance to cover agents are building toward authoritative incoherence, not at the level of a single source system, but across the full operational stack agents traverse. The sequence this series has argued for is a structural precondition, not a governance improvement programme.

Foundation, then translation, then the honest funding of coordination obligations, then governance architecture designed for the environment agents actually operate in. In that order, or not at all.

Where in your organisation is agent-driven automation already writing back into operational systems, or will be within the next year? What happens to everything downstream if what it writes is wrong for thirty days before anyone notices? That question tends to locate the governance gap more precisely than any maturity framework. I would like to hear what you are finding.

Further reading

The architectural accountability argument draws on The Governance Paradox: Human Oversight at Machine Speed, which examines what governance looks like when decision volume exceeds human review capacity. The platform capability argument is developed in The Data Platform Was Never a Place. Both approach the same underlying question from different angles: what does it mean to govern systems that operate faster than human oversight can follow? The learning loop argument in this essay, that agent behaviour must feed back into governance architecture continuously, connects to the feedback-first organisational design argued in The Entropy Tax: A New Law of Organizational Physics.

An honest endnote

This essay argues for a learning loop that treats agent behaviour as a governance signal, but does not specify what that loop looks like in practice: who owns it, what signals it processes, how frequently it runs, how its outputs feed back into the source governance and translation layer decisions made in the first two essays, and how it keeps pace with meaning that is always shifting rather than drifting from a stable state. That is a genuine gap. Others are going considerably deeper into agentic architecture and the operational design of agent networks than this series does. Eric Broda’s work on agentic mesh at agenticmesh.substack.com is worth reading for anyone who wants to follow that thread further. This series has chosen to stay at the governance and organisational authority layer rather than the agentic architecture layer, and that is a deliberate boundary, not an oversight. Most organisations deploying agentic systems have not yet designed the feedback loop at all, let alone designed it well. That is where the most important work remains to be done.